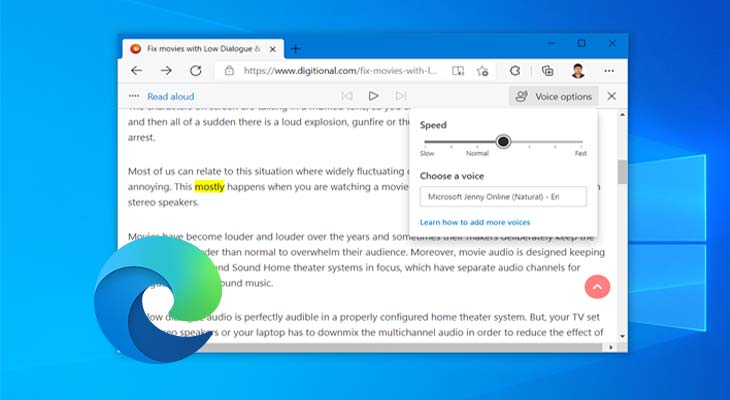

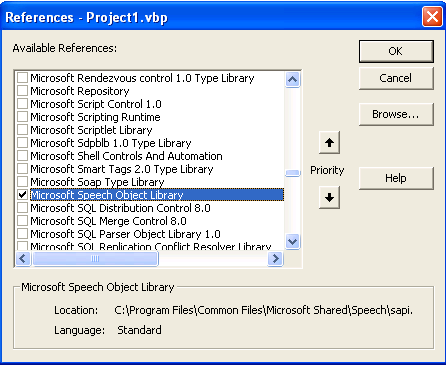

We use Google Analytics to understand how the site is being used in order to improve your user experience. Whenever you use this service, we collect information that your browser sends to us. This section informs website visitors of our policies on the collection, use, and disclosure of Personal Information for anyone who decides to use this service. This information is collected by major web servers by default and includes your computer’s Internet Protocol (“IP”) address, your browser’s user agent, and the time and date of your visit.

To start voice typing, press Windows logo key + H on a hardware keyboard, or press the microphone key next to the Spacebar on the touch keyboard. You can also press the microphone button on the voice typing menu, or say a voice typing command such as 'Stop listening'. For example, you can dictate text to fill out online forms, or you can dictate text into a word-processing program such as WordPad to type a letter.

I have a C# WinForms application that uses Azure speech to text to convert speech into text. The form has one checkbox that turns speech on and off, and it performs continuous speech recognition until I close the window.

The acoustic and language models in the Microsoft Speech-to-Text engine have been trained on an enormous collection of speech and text, and they provide state-of-the-art performance for the most common usage scenarios, such as interacting with Cortana on your smartphone, tablet, or PC, searching the web by voice, or dictating text messages to a friend.

Audio is a data type that matters for companies. Knowledge Mining is a technique for extracting insights from structured and unstructured data. In this context, Azure Search is the standard Microsoft Knowledge Mining service: it uses AI to create metadata about images, relational databases, and textual data, providing a web-like search experience.

To save generated audio, right-click the audio player and choose "Save audio as."
Generation should finish almost instantly, because the interface tries to generate audio at x16777215 real-time. Wait for the generated audio to appear in the audio player.

This code story delves into our Fortis solution. It provides examples of the Spark Streaming custom receivers needed to consume the Azure Cognitive Services speech-to-text API and shows how to integrate with the Azure Cognitive Services speech-to-text protocol.

On Windows 7, you can use Speech Recognition to dictate text to your Windows PC with your voice.

To set up the SAPI paths, select Library Files from the drop-down menu, click the first unused line in the paths list, and enter 'C:\Program Files\Microsoft Speech SDK 5.3\Lib\i386'. Add the include path 'C:\Program Files\Microsoft Speech SDK 5.3\Include' the same way under Include Files.

Microsoft Sam TTS Generator is an online interface for part of Microsoft Speech API 4.0, which was released in 1998. All voices have lower and upper pitch and speed limits. Note that the BonziBUDDY voice is actually "Adult Male #2" with a specific pitch and speed.

The GitHub repository Azure-Samples/Cognitive-Speech-TTS contains sample code in several languages for the Microsoft Text-to-Speech API, which is part of Cognitive Services.

The Azure speech to text overview describes the benefits and capabilities of the speech to text feature of the Speech service, which is part of Azure AI services. Its sections cover real-time speech to text, batch transcription, Custom Speech, and responsible AI.

To dictate text, open Speech Recognition by clicking the Start button, clicking All Programs, clicking Accessories, clicking Ease of Access, and then clicking Windows Speech Recognition. When you speak into the microphone, Windows Speech Recognition converts your spoken words into text that appears on your screen.

The asker wanted to process the data only after a keyword is spoken. From the answer: the scenario for the SDK is transcribing an audio stream to text (press a button and start speaking); waiting for a keyword and transcribing only from that point on is not its primary scenario. It is theoretically possible to 'wait for the keyword' with the SDK, but a dedicated keyword spotter, perhaps even with low-power support, is better suited for this. We plan to make something like this available in a future version (but no ETA yet). This is explained in the docs and demonstrated in the samples. For the latest set of samples, check out our …

An additional comment regarding this statement: you have to 'listen' to speech events to receive the speech recognition results from the speech endpoint.
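The answer above makes two points: recognition results arrive only through speech events, and acting on a keyword is better left to a dedicated keyword spotter. The Python sketch below illustrates both ideas without the Azure Speech SDK. `Signal`, `FakeRecognizer`, `simulate_phrase`, and `KeywordGate` are hypothetical stand-ins written for this example. In the actual Python Speech SDK, the comparable pieces are the recognizer's `recognized` event (subscribed to with `connect`) and `start_continuous_recognition()` / `stop_continuous_recognition()`, and a real handler receives an event object (recognized text in `evt.result.text`) rather than a plain string.

```python
import re
from typing import Callable, List


class Signal:
    """Hypothetical stand-in for an SDK event that handlers subscribe to."""

    def __init__(self) -> None:
        self._handlers: List[Callable[[str], None]] = []

    def connect(self, handler: Callable[[str], None]) -> None:
        self._handlers.append(handler)

    def fire(self, text: str) -> None:
        for handler in self._handlers:
            handler(text)


class FakeRecognizer:
    """Hypothetical recognizer: emits 'recognized' events only while running."""

    def __init__(self) -> None:
        self.recognized = Signal()
        self.running = False

    def start_continuous_recognition(self) -> None:
        self.running = True

    def stop_continuous_recognition(self) -> None:
        self.running = False

    def simulate_phrase(self, text: str) -> None:
        # Stands in for the speech endpoint returning a final result.
        if self.running:
            self.recognized.fire(text)


class KeywordGate:
    """Event handler that keeps only text following the first keyword match."""

    def __init__(self, keyword: str) -> None:
        self._pattern = re.compile(re.escape(keyword), re.IGNORECASE)
        self.armed = False
        self.kept: List[str] = []

    def __call__(self, text: str) -> None:
        if not self.armed:
            match = self._pattern.search(text)
            if match is None:
                return  # discard everything before the keyword
            self.armed = True
            text = text[match.end():].strip(" ,.!?")
        if text:
            self.kept.append(text)


def run_demo() -> List[str]:
    recognizer = FakeRecognizer()
    gate = KeywordGate("ok computer")
    recognizer.recognized.connect(gate)  # subscribe before starting

    recognizer.start_continuous_recognition()  # e.g. checkbox ticked
    for phrase in ["good morning", "OK computer, open the report", "and print it"]:
        recognizer.simulate_phrase(phrase)
    recognizer.stop_continuous_recognition()  # e.g. checkbox cleared or window closed
    recognizer.simulate_phrase("ignored after stop")
    return gate.kept


if __name__ == "__main__":
    print(run_demo())  # ['open the report', 'and print it']
```

Filtering recognized text like this only approximates keyword spotting: all audio before the keyword is still sent to the speech endpoint and transcribed, and a keyword split across two recognition results will not match. The start and stop calls correspond to ticking and clearing the on/off checkbox (or closing the window) in the WinForms scenario described earlier.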
